By 2045, Humans May No Longer Be the Top Species on Earth

The news: In just over 30 years, there's a good chance the human race will be bowing down to a new species of overlords: robots.

In a recent interview with Business Insider, physicist Louis Del Monte said he believes the singularity — the point in the future when machine intelligence will outmatch even the smartest humans — is approaching quickly and will be here sooner than we think.

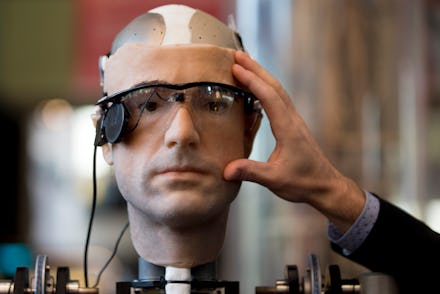

"By the end of this century, most of the human race will have become cyborgs [part human, part tech or machine]," Del Monte told Business Insider. "The allure will be immortality. Machines will make breakthroughs in medical technology, most of the human race will have more leisure time, and we'll think we've never had it better. The concern I'm raising is that the machines will view us as an unpredictable and dangerous species."

"[Humanity is] unstable, creates wars, has weapons to wipe out the world twice over and makes computer viruses," he says. None of these are necessarily ideal traits in a potential neighbor and Del Monte warns that intelligent machines could assert their independence or even attempt to convince people to augment themselves with superior synthetic parts.

So, is this for real? Barring major setbacks in technology, humanity seems to be on the road toward creating true artificial intelligences. Depending on whom you ask, the date by which that is likely to happen is either predictable or complete conjecture. Google Director of Engineering Ray Kurzweil, for example, thinks the 2045 date is spot on and is inevitable given the progression of processing power, which is commonly said to double about every 18 months.

But skeptics like experimental psychologist and cognitive scientist Steven Pinker think that all this futurism is a little silly. In 2008, he told IEEE Spectrum:

"There is not the slightest reason to believe in a coming singularity. The fact that you can visualize a future in your imagination is not evidence that it is likely or even possible. Look at domed cities, jet-pack commuting, underwater cities, mile-high buildings and nuclear-powered automobiles — all staples of futuristic fantasies when I was a child that have never arrived. Sheer processing power is not a pixie dust that magically solves all your problems."

In 2006, The Economist poked more holes in Kurzweil's thesis by applying the same extrapolation logic to the number of blades on a razor.

There are, of course, other criticisms of the idea of the singularity. For one, it's quite possible that society will collapse in on itself from technology-driven unemployment before AI is developed to the requisite point. Additionally, machines wouldn't become the planet's dominant species overnight; they'd depend on billions of humans to develop, maintain and support them. George Dvorsky, of io9, makes a compelling argument: "Being twice as smart as a human doesn't suddenly mean you can make yourself infinitely smart."

Still ... Even if a shift in human consciousness isn't imminent, autonomous machines will create challenges of their own, whether or not they are intelligent. The UN is already debating whether to ban military robots that could select their own targets. Expert systems that exceed human capabilities in one area but fail to understand others could be catastrophic; a smart system organizing high-frequency stock trading, for example, could operate so fast that it destabilizes the market (this actually happened in 2010, in the so-called flash crash).

People could also fail to understand the systems they've created, especially if those systems improve themselves autonomously. Watson, a computer program created to beat the best human Jeopardy! players, is not completely understood by its own developers. While technology might indeed solve many of our problems, it will almost certainly create unprecedented challenges. The important thing is to understand those challenges before we're left with the most terrifying (and arguably awesome) machines possible.